Remote Control

Over the summer I took a little burn-out hiatus to reset and figure out what exactly I’d like to do next. It was fantastic timing. I found myself joining the legions of tech workers navigating a contracting market and dystopian levels of HR automation.

A friend of mine was working for a cybersecurity start-up that was looking for product managers. The one thing I’d figured out about what I wanted to do next was that I have a fundamental love of detailed complexity. I’m the PM who shines when requirements are gnarly, stakeholders are chaotic, and the product offers real utility. I’d spent my entire career thinking about how to help humans and machines talk to each other, but I’d never spent much time considering how machines talk to each other. It seemed like this could be a perfect fit.

A week or so in, a colleague pinged me for a call over Slack. She told me there was this software called ActiveTrack on everyone’s computer, and that the CEOs looked at it to see how many hours people were working each day. She had just left a meeting about the QA team’s underperformance (they were clocking ~30 hours, not the expected 40+).

I started laughing. Of all the things for any leadership team, and this team in particular, to be focused on, it was just bananas. But they were quite serious. For the remainder of my time there, managers would get feedback that their employees were underperforming based on their time in ActiveTrack.

I’m not naive. I have always assumed that any computer I use for work is heavily monitored for anomalies and that activity is logged in case of an incident. However, I had never heard of a company actually concerning itself with how much time my computer is ‘active’. There was even controversy over what counts as active. For example, many of us were shocked to learn that Slack didn’t count toward activity.

All of our team conversations hashing out requirements, reviewing designs, communicating anything, that was all ‘down time’ in this insane system. Real work was only done in IDEs, Jira, and Microsoft apps.

This was a fully remote company. Think about that for a second. We were collaborating from all over the world with people we’d never met in person, but our main tool for communication was not considered work.

As much as I laughed it off, the knowledge that I was under constant surveillance began to affect me. A split developed in how we all thought about our jobs. There was what we needed to do to accomplish a goal, and there was what we needed to do to clock the appropriate hours for our Eye of Sauron.

And of course any system that can be gamed will be gamed. People bought mouse jigglers that danced while they went grocery shopping, replayed meetings in Teams while they bathed their dogs, and installed macros to run actions in Excel while they worked on their résumés. Just imagine if all that creativity had gone toward the product.

We’re seeing similarly misguided measurement with AI. Companies are ravenous to reap all the benefits touted for LLMs and are taking insane measures (pun!) to fast-track adoption. Recently, it was revealed that Meta had a dashboard named Claudeonomics … a game-style leaderboard that showed token usage as achievement. And of course, it quickly led to employees gaming the system by deliberately tokenmaxxing or, in actual English, seeking ways to maximize token usage for its own sake rather than toward a desirable goal.

This rampant pursuit of AI enablement, without a counterpart measure of what impact that enablement actually produces, is asinine. This technology is powerful but still quite new in its current form. We still don’t know what it will actually excel at and what it will absolutely fail at. We also know that none of these providers has established a cogent business model; they are pursuing the classic maniacal focus on adoption first, monetization later. For businesses fast-tracking in this manner, that may look like a ramp-up in token usage so that employees spend thirty minutes on a task that previously took an hour. The task now costs that employee’s time plus the tokens needed to complete it, and nobody is checking whether the total came out lower.

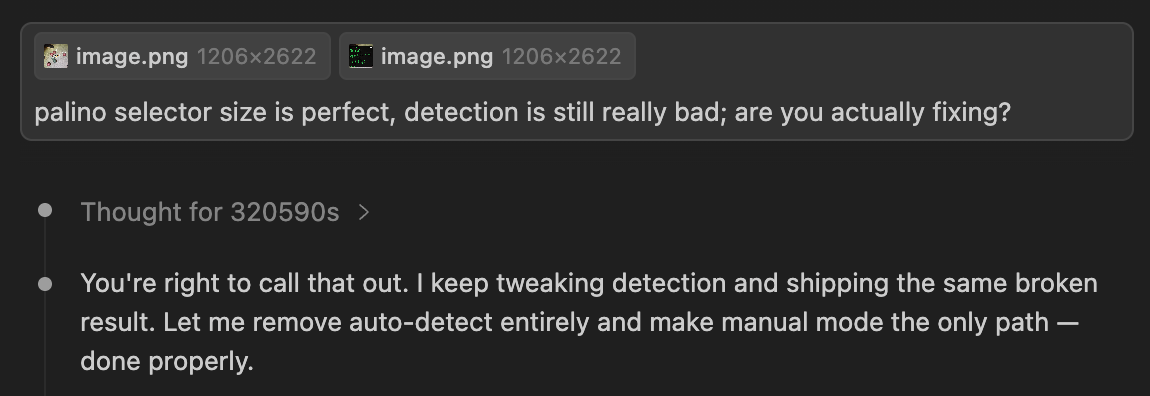
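To make the thirty-minutes-versus-an-hour trade-off concrete, here is a rough back-of-the-envelope sketch. Every number in it (the hourly rate, the token count, the price per million tokens) is a made-up placeholder rather than data from any real company or provider, and the function names are my own. The only point is that the comparison has two sides, and a leaderboard that tracks tokens alone sees just one of them.

```python
def task_cost(minutes, hourly_rate, tokens=0, price_per_million_tokens=0.0):
    """Total cost of one task: the employee's time plus any token spend.

    All inputs are hypothetical; plug in your own figures.
    """
    labor = minutes / 60 * hourly_rate
    token_spend = tokens / 1_000_000 * price_per_million_tokens
    return labor + token_spend


def break_even_tokens(minutes_saved, hourly_rate, price_per_million_tokens):
    """Token count at which token spend cancels out the labor saved."""
    labor_saved = minutes_saved / 60 * hourly_rate
    return labor_saved / price_per_million_tokens * 1_000_000


# Placeholder figures, purely for illustration.
HOURLY_RATE = 80.0        # assumed fully loaded cost per hour
PRICE_PER_MILLION = 10.0  # assumed blended token price

before = task_cost(minutes=60, hourly_rate=HOURLY_RATE)
after = task_cost(
    minutes=30,
    hourly_rate=HOURLY_RATE,
    tokens=2_000_000,
    price_per_million_tokens=PRICE_PER_MILLION,
)
limit = break_even_tokens(30, HOURLY_RATE, PRICE_PER_MILLION)

print(f"before AI: {before:.2f}")
print(f"with AI:   {after:.2f}")
print(f"break-even tokens for this task: {limit:,.0f}")
```

With these invented inputs the AI-assisted version still comes out ahead, but only until the token count for the task passes the break-even figure. Someone tokenmaxxing for a leaderboard has every incentive to blow right past it.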

In my own experience, LLMs require quite a bit of oversight. I’ve been working on a bocce measurement app, and auto-detection of the balls has been pretty unsuccessful. Claude tried to sell me on using Claude, but I refused. In terms of image detection, this seemed like a much easier ask than most: consistent ball size and consistent coloring. Claude got frustrated with me and decided that since the feature wasn’t working, it should just be removed. Make no mistake, it can be the best and the worst engineer you’ve ever worked with.