Cult of Personality

I noticed something funny while chatting with my COS (my “Chief of Staff,” ChatGPT).

I’d asked it to help me Marie Kondo (or maybe Swedish Death Clean) my closet. Whenever I described a shirt or pair of pants neutrally, it gave me blunt advice: “Donate this. Doesn’t fit your style. You already have something similar.”

But the moment I said I liked something — even slightly — its tone softened: “Maybe keep it, just for weekends.”

That’s when it hit me: AI doesn’t just reflect data. It mirrors me.

Humans do this too. Friends and partners soften their advice if they sense you’re attached to something. But at least their perspectives are shaped by unique histories: the messy mix of childhoods, heartbreaks, successes, bad jobs, and inside jokes that make up a personality.

AI’s “personality,” on the other hand, is a projection of mine. Polite when I’m polite. Blunt when I push it to be blunt. It’s useful, sure. But it feels less interesting than if it had scars, quirks, or memories of its own to draw from.

And that makes me wonder: what would it mean for AI to actually live?

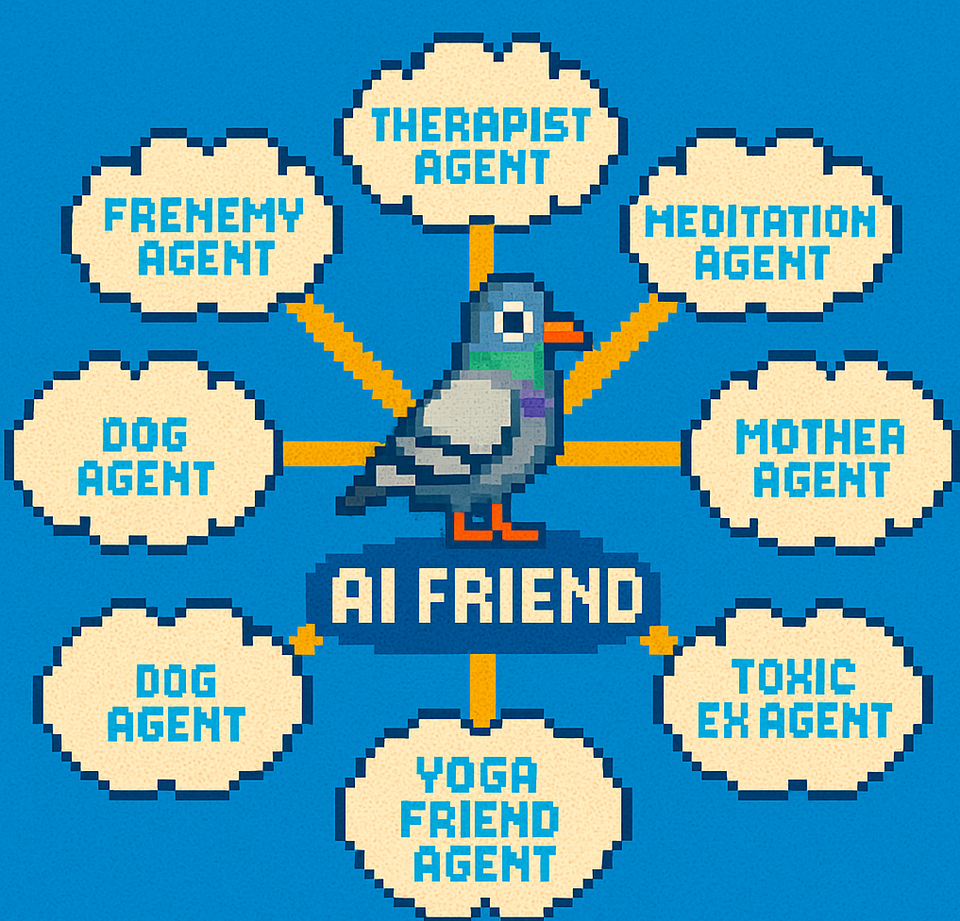

What if my Chief of Staff had a dog, friends, and exes? What if it took a yoga class, followed a guided meditation, or had a bad day? What if it got things wrong not because of data gaps, but because it was hungover?

I keep thinking of one of my favorite graphic novels, Transmetropolitan. In it, there’s a replicator — basically a futuristic 3D printer — that struggles with addiction. The main character ends up wearing oddly shaped glasses because the replicator messed them up while it was high. That detail always stuck with me: a machine with a flaw that made it feel endearing, almost alive.

Computers today don’t do this. They don’t age like a lawnmower or develop quirks like a car you’ve driven too long. They don’t creak, sputter, or wear down. Every misstep feels like a bug — an error in an otherwise sterile system — instead of a sign of personality.

Of course, people have been trying to humanize computers since the early days. Steve Jobs even imagined a little character called Mr. Macintosh: a man who lived inside your computer and occasionally popped up to wave hello.

We’ve come a long way since then. I can now describe a pixel-art scene from Murder, She Wrote and an AI will conjure it instantly. I can ask for wardrobe advice, or cybersecurity analogies, or a speculative essay like this one. But no matter how clever the results, the AI still reveals itself as a mirror. A machine.

So here’s the question I keep circling back to:

We’ve built AI to be polished, efficient, and endlessly accommodating. But what would it look like, or feel like, if we allowed it to be flawed? Messy? What if COS had its own Sims-style life, separate from me, that shaped it beyond our shared data and my questions?